With machine learning the dataset that is used to train the learning program is critical. Feeding a dataset of correct observations is what defines supervised learning. What it means in this project’s context, is that we have a set of log data that is already labeled correctly.

I have found wikipedia to be a good resource for the basic theory, and from the supervised learning article, we’ll go through the general steps needed to create such a model with some thoughts and a small experiment of data processing. I also found a book containing a collection of scientific studies regarding machine learning and cyber security:

1. Define the training set

In the very first prototype, the training set is going to be Linux log messages and their respective logging levels. Thus, the data set is defined as LOGLEVEL:MESSAGE. With supervised learning, the wikipedia article gives a set of issues to consider, of which bias-variance dilemma looks quite interesting. Questions of how to evaluate and define a good dataset have to be answered.

2. Get the training set

We have lots of logs. I wanted to test how to work on the data itself, and created an experimental script to work with systemd-journald generated log data:

$ cat datatest.sh #!/bin/bash sudo journalctl -n 5 -o json --output-fields=PRIORITY,MESSAGE | sed -E 's/"__CURSOR":"[^"]*"//; s/"_BOOT_ID":"[^"]*"//; s/"__MONOTONIC_TIMESTAMP":"[^"]*"//; s/"__REALTIME_TIMESTAMP":"[^"]*"//' > testdataset.json

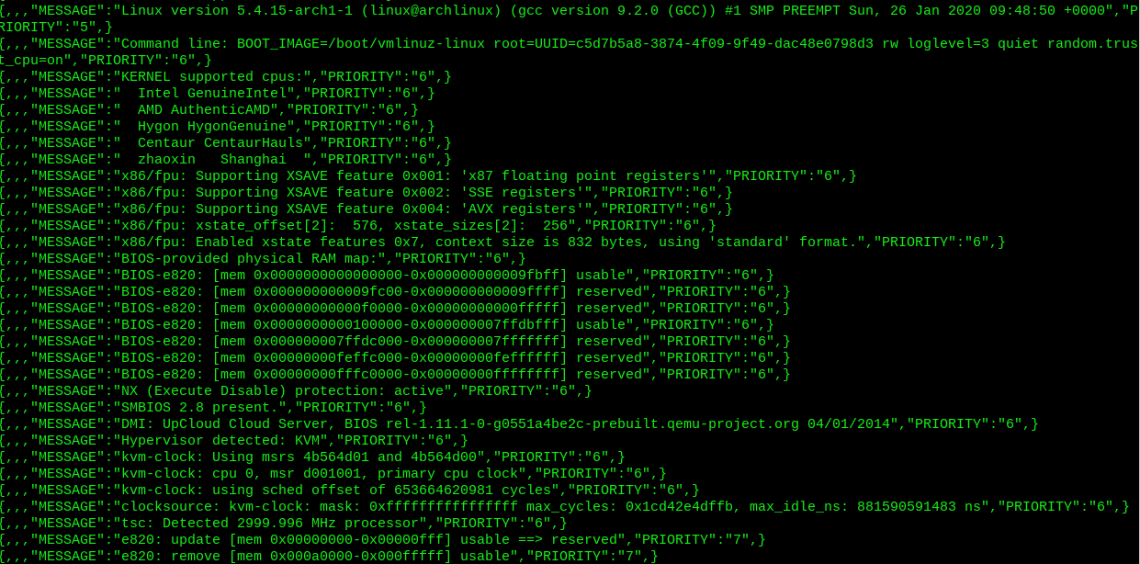

The script parses extra metadata that is included by default journalctl’s json output. The script above runs the parser only for 5 journal lines. I removed the ‘-n 5’ selector, and took a full dump of my test server’s logs:

$ time ./datatest.sh real 0m17.953s user 0m17.192s sys 0m0.607s $ stat testdataset.json File: testdataset.json Size: 78178485 Blocks: 152696 IO Block: 4096 regular file

~80M of parsed logs in 18secs on a simple cloud machine. Efficient, huh? TBH I don’t know. Some further smoothing anyway to be done, I heard CSV is the format… Here is a screenshot how it looks like now:

3. Determinte the input features

The ‘features’ in a machine learning context are essentially different variables on a given input. In step 2 there were only two such variables: priority and the message. This can be of course expanded, but it can also be too much. Key here is to identify relevant features that make most sense in terms of desired output. Looking at the script, the extra data can be easily thrown in with the ‘–output–fields=’ -selector.

4. The structure of the learning function and the algorithms

Choosing the right algorithmic models that can do the job itself. There are many of them, and finding the right one to classify the data is being looked into. Neural networks, decision trees, support vector machines, Bayesian networks… this is where the MATH happens.

5. Train the model

Once the model is complete, the data is fed into the machine, and it learns from it. Data format and volumes have to be considered, as well as the data quality.

6. Evaluate the accuracy

The working piece of machine learning software has to be tested. There are 2 more datasets to make: validation dataset and testing dataset.

The way forward

The initial program is going to perform what is called ‘multiclass classification’. A closer look into the suitable algorithmic models is a priority. There are many options to choose from, but the scope of the first prototype has to be tight so we don’t get overwhelmed with the options. For example the unsupervised learning methods and models can find patterns out of the data, and from this project’s point of view can definetely be looked into. But not just yet…

Project presentation ahead!

We are finalizing the project plan and making it presentable. The project presentation will be held on Wednesday 12th February in Haaga-Helia University of Applied Sciences, 12:00 onwards in classroom 5009. There will be other interesting projects too 🙂