I spent my friday evening playing with the first model, and while it’s certainly not perfect, it’s a start. By experimenting with some of the parameters, I could train the model to achieve about 98% accuracy. On it’s own data. When I made the dataset bigger, the performance degraded.

The whole ‘foodchain’ of the machine learning model has to be considered, and I refactored the code to represent the phases more clearly. What is also very important, is the dataset itself used to training. I also did a preliminary dig on how to produce more meaningful error metrics, as the aforementioned 98% is just a hit/miss ratio.

Initial tunings

The very first version has a 80% success rate, which means the model can’t classify the data it was trained on. The first obvious thing is to increase the epochs in the training phase. An epoch is the data set fed once to the neural network, and the whole process can be time consuming.

model.fit(log_text_data,classes,verbose=2,epochs=100)

The original value was 50, and doubling it doubled the time used to train the model. Next, I played on the word vocabulary size on the tokenizer, but it didn’t seem to have a noticeable effect. What had an effect, was to increase the nodes in the 2 hidden layers to 64 on the model.

model.add(Dense(64, input_dim=log_text_data.shape[1], activation='relu')) model.add(Dense(64, activation='relu'))

Now the model can classify the data it was trained on with 98% accuracy. Using training set as a validation set produces overly optimistic results, so lets not get excited yet.

The data, always the data

The most critical part is the training data. Good quality is key here, and constructing a good training dataset is difficult.

- Splitting the data into training and validation sets for better metrics.

- Log levels 0,1,2,3 and 7 are underrepresented in the whole set.

- Is there other relevant data that could be used to distinguish the log level?

- Does the set shuffling play a role here? The data is pretty ordered…

Natural language processing – tokenizer

The tokenizer is quite a crude one, so a lot of room for improvement here. The method used now is a straight word to number encoding. Some of the options are:

- Better text preprocessing – examine the process: is there data that is not useful?

- NLP techniques: tf-idf, n-grams (word combinations), lstm…

- Tokenizer function parameters usage

- Character-based tokenization, character n-grams

- Even the padding process might make a difference

The model

The so-called hyperparameters are the parameters that are fixed before the model is trained. These have an effect to the time the model takes to train, as in epochs or the complexity and number of the hidden layers. This is a broad field to be trampled…

- Are there other NN layers that could improve the model?

- What are the relevant hyperparameters and on what basis should they be chosen?

Evaluation techniques

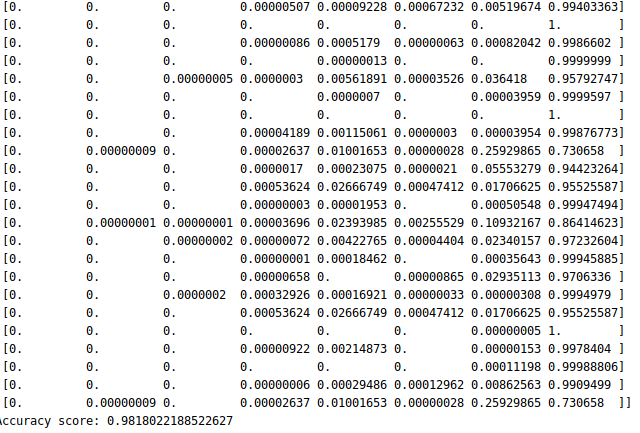

Data from data means statistics. The confidence levels (probabilities) on the classification can already be seen with the prediction function, as we have a softmax function on the final layer of our model:

Each column here presesnts a class (log levels 0 to 7), and the value on the rows is the probability of an entry of beloning to each class. The sample is from the end of the dataset, where the entries belong to the class 7 (last column).

There are many ways to analyze the model and produce meaningful metrics and graphs from the models. For example the model.fit() -method call, which actually trains the model, can be injected with monitoring functions. The techniques of graphical presentations should alse be explored, matplotlib etc.

Conclusion

It has become clear that this simple classification model has it’s limitations, but by evaluating and making the simple model better makes us familiar with the tools used in ML-model evaluation. The live system implementation design could also be started now as we have a working model.

The DeepLog paper describes an approach to look for anomalies by comparing and classifying temporal windows in logs. DeepLog uses Long Short-Term Memory (LSTM) in it’s language sequence processing, among other things.